Transformers4Rec bridges the gap between NLP transformers and recommender systems. You get access to 64+ HuggingFace transformer architectures — XLNet, BERT, GPT-2, T5 — and train them directly on interaction sequences: items viewed, products browsed, content consumed.

Why I starred it

Recommendation systems have a representation problem. Most frameworks only accept sequences of item IDs. That's fine for basic collaborative filtering, but real session data is richer: you have timestamps, price signals, category hierarchies, continuous features like dwell time. NLP transformers were never designed for this — they expect token sequences, period.

Transformers4Rec solves this with a schema-driven input layer that projects heterogeneous tabular data into the dense embedding space a transformer expects. Define a schema, call TabularSequenceFeatures.from_schema(), and the library handles embedding tables, normalization, and projection — no manual plumbing per feature. That's the design decision worth understanding here.

How it works

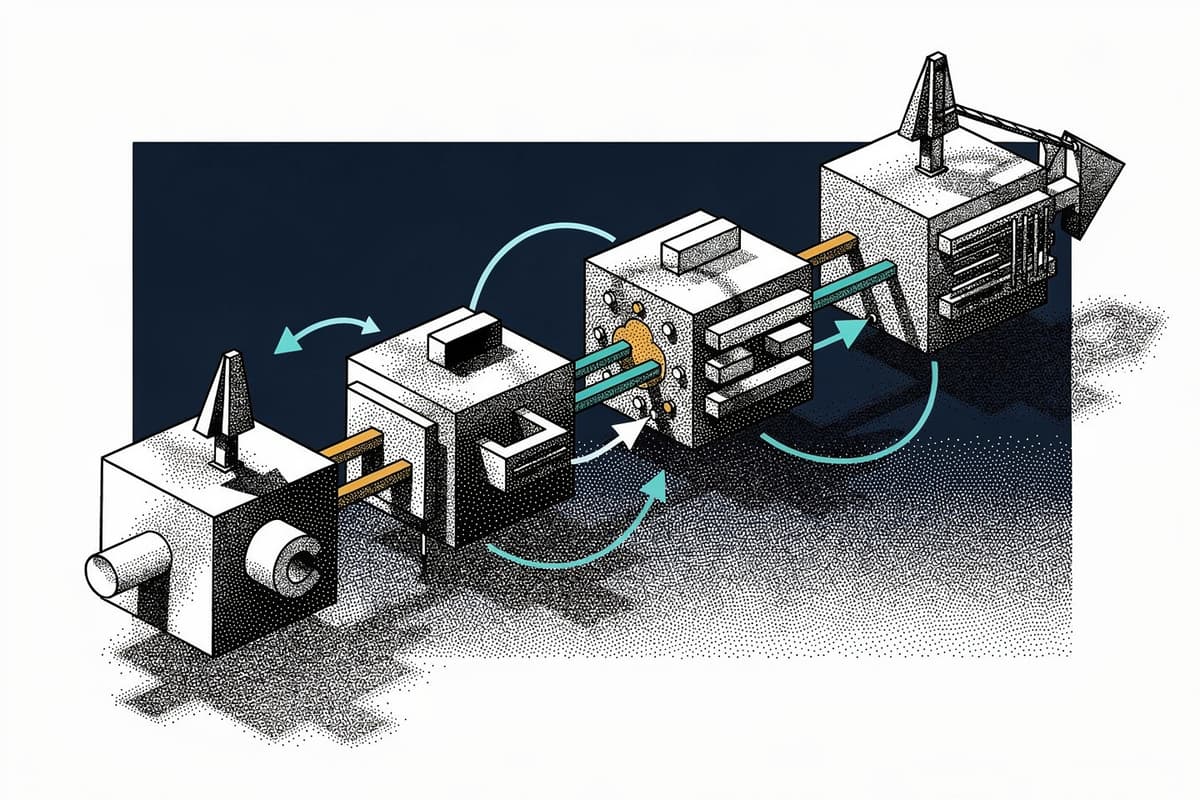

The architecture has three layers: input processing, transformer body, and prediction head.

Input layer lives in transformers4rec/torch/features/sequence.py. The key class is TabularSequenceFeatures, which inherits from TabularFeatures and extends it to produce 3D tensors (batch × sequence × features). When you pass a schema, it auto-builds embedding tables for categorical columns and projection layers for continuous ones via SequenceEmbeddingFeatures.table_to_embedding_module():

```python
def table_to_embedding_module(self, table: embedding.TableConfig) -> torch.nn.Embedding:
    embedding_table = torch.nn.Embedding(
        table.vocabulary_size, table.dim, padding_idx=self.padding_idx
    )
    if table.initializer is not None:
        table.initializer(embedding_table.weight)
    return embedding_table
```

Nothing magic, but the automation of wiring these up from a schema file is what saves hours of boilerplate across a real feature set.
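To make the "concat" aggregation concrete, here is a minimal sketch in plain Python, not the library's code. It assumes each categorical column gets its own embedding of a chosen width and that all continuous columns are projected together to `continuous_projection` dimensions before concatenation. The `Column` dataclass, the `concat_width` helper, and the column names and widths are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Column:
    """Hypothetical schema entry; not the Merlin schema class."""
    name: str
    kind: str               # "categorical" or "continuous"
    embedding_dim: int = 0  # only used for categorical columns


def concat_width(schema: List[Column], continuous_projection: int) -> int:
    """Width of the last axis after concatenating all per-step features."""
    categorical = sum(c.embedding_dim for c in schema if c.kind == "categorical")
    has_continuous = any(c.kind == "continuous" for c in schema)
    # Assumption: all continuous columns share a single projection.
    return categorical + (continuous_projection if has_continuous else 0)


schema = [
    Column("item_id", "categorical", embedding_dim=64),
    Column("category", "categorical", embedding_dim=16),
    Column("price", "continuous"),
    Column("dwell_time", "continuous"),
]

width = concat_width(schema, continuous_projection=64)
print(width)  # 64 + 16 + 64 = 144
```

Under these assumptions the input layer would emit a (batch × sequence × 144) tensor, so a projection step is still needed before a transformer whose hidden size is smaller than that.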

Transformer body is in transformers4rec/torch/block/transformer.py. TransformerBlock wraps any HuggingFace PreTrainedModel or PretrainedConfig. If you pass a T4RecConfig (their config abstraction from transformers4rec/config/transformer.py), it instantiates the HF model via transformers.MODEL_MAPPING. Different architectures need different input preparation — GPT-2 requires a triangular causal mask shaped as (n_layer, 1, 1, seq_len, seq_len), which GPT2Prepare.forward() builds on the fly. XLNet and BERT have their own variants. The TRANSFORMER_TO_PREPARE dict on TransformerBlock maps model classes to their prepare modules.
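The GPT-2 mask shape described above is easier to picture with a small sketch. It uses nested Python lists rather than tensors so that it runs without PyTorch. `causal_head_mask`, `shape`, and the `PREPARE_BY_ARCH` registry are hypothetical names that only mirror the idea behind TRANSFORMER_TO_PREPARE, not its actual contents.

```python
from typing import Callable, Dict, List

Mask = List[List[List[List[List[int]]]]]  # (n_layer, 1, 1, seq_len, seq_len)


def causal_head_mask(n_layer: int, seq_len: int) -> Mask:
    """Lower-triangular mask repeated once per layer."""
    def tril() -> List[List[int]]:
        # Position i may attend to positions 0..i only.
        return [[1 if j <= i else 0 for j in range(seq_len)] for i in range(seq_len)]
    return [[[tril()]] for _ in range(n_layer)]


def shape(nested) -> tuple:
    """Shape of a rectangular, non-empty nested list."""
    dims = []
    while isinstance(nested, list):
        dims.append(len(nested))
        nested = nested[0]
    return tuple(dims)


# Hypothetical registry: architecture name -> input preparation function.
PREPARE_BY_ARCH: Dict[str, Callable[[int, int], Mask]] = {
    "gpt2": causal_head_mask,
}

mask = PREPARE_BY_ARCH["gpt2"](2, 4)
print(shape(mask))    # (2, 1, 1, 4, 4)
print(mask[0][0][0])  # rows of the 4x4 lower-triangular matrix
```

Keeping the per-architecture preparation behind a lookup table is what lets the same block accept different models without branching on model type at every call site.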

Masking is where the RecSys adaptation gets interesting. Standard LM training in NLP assumes you're masking tokens for a language objective. Here, you're masking item interactions — the goal is next-item prediction, not next-word. The MaskSequence base class in masking.py supports four strategies: causal LM (CLM), masked LM (MLM), permutation LM (PLM), and replacement token detection (RTD). Causal masking predicts the next item from all preceding items in a session. MLM masks random items and forces the model to reconstruct them — effectively BERT-style training on interaction sequences.
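The difference between causal and masked training targets can be sketched on a single session of item IDs. This is an illustration in plain Python, not the library's MaskSequence implementation. `MASK_ID`, `causal_examples`, and `masked_example` are hypothetical, and the fallback that forces at least one masked position is an assumption made so that every session yields a training target.

```python
import random
from typing import List, Optional, Tuple

MASK_ID = -1  # assumed placeholder for a hidden item


def causal_examples(session: List[int]) -> List[Tuple[List[int], int]]:
    """CLM: predict each item from the items that precede it."""
    return [(session[:i], session[i]) for i in range(1, len(session))]


def masked_example(
    session: List[int], mask_prob: float, rng: random.Random
) -> Tuple[List[int], List[Optional[int]]]:
    """MLM: hide random positions and keep the hidden items as labels."""
    inputs = list(session)
    labels: List[Optional[int]] = [None] * len(session)
    for i, item in enumerate(session):
        if rng.random() < mask_prob:
            inputs[i], labels[i] = MASK_ID, item
    if all(label is None for label in labels):
        # Guarantee at least one training target per session.
        i = rng.randrange(len(session))
        inputs[i], labels[i] = MASK_ID, session[i]
    return inputs, labels


session = [101, 205, 309, 412]  # item ids in click order
print(causal_examples(session))
# [([101], 205), ([101, 205], 309), ([101, 205, 309], 412)]

inputs, labels = masked_example(session, mask_prob=0.3, rng=random.Random(0))
print(inputs, labels)
```

In the causal case every target lies strictly after its context. In the masked case the model sees items on both sides of the hidden position, which matches training on complete sessions but not live inference, where only the past is available.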

The T4RecConfig abstraction in transformers4rec/config/transformer.py also exposes a clean to_torch_model() shortcut that wires input features, transformer body, and prediction tasks into a single Model object — useful when you don't want to build the SequentialBlock manually.

Prediction tasks in transformers4rec/torch/model/prediction_task.py include NextItemPredictionTask, BinaryClassificationTask, and regression variants. The binary classification task uses a BinaryClassificationPrepareBlock that builds the output head lazily via build(input_size) — so it doesn't need to know dimensions until after the rest of the network is constructed. This deferred build pattern appears throughout the codebase wherever output dimensions depend on upstream layers.
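The deferred build pattern can be sketched without any framework. The class below is hypothetical and only imitates the shape of a `build(input_size)` call; it is not BinaryClassificationPrepareBlock, and the zero-initialized weights are a placeholder for whatever initialization a real head would use.

```python
from typing import List, Optional


class LazyBinaryHead:
    """Hypothetical head that allocates its weights only when build() is called."""

    def __init__(self) -> None:
        self.weights: Optional[List[float]] = None

    def build(self, input_size: int) -> "LazyBinaryHead":
        # The input width is supplied by whatever block sits upstream.
        self.weights = [0.0] * input_size
        return self

    def __call__(self, features: List[float]) -> float:
        if self.weights is None:
            raise RuntimeError("call build(input_size) before using the head")
        if len(features) != len(self.weights):
            raise ValueError("feature width does not match built input_size")
        # Raw logit; a real head would apply a sigmoid for binary outputs.
        return sum(w * x for w, x in zip(self.weights, features))


head = LazyBinaryHead()   # no dimensions known yet
body_output_size = 64     # becomes known once the body is assembled
head.build(body_output_size)
print(len(head.weights))  # 64
```

The benefit is ordering freedom: the head can be declared alongside the task definition and sized later, once the body's output width is fixed.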

Using it

Install with the Merlin dataloader (GPU-accelerated, strongly recommended):

```bash
pip install transformers4rec[nvtabular]
```

Minimal XLNet next-item prediction setup:

```python
from transformers4rec import torch as tr

# Synthetic schema that ships with the library for testing
schema = tr.data.tabular_sequence_testing_data.schema
d_model = 64

# Input layer: embeddings plus continuous projection, with causal masking
input_module = tr.TabularSequenceFeatures.from_schema(
    schema,
    max_sequence_length=20,
    continuous_projection=d_model,
    aggregation="concat",
    masking="causal",
)

transformer_config = tr.XLNetConfig.build(
    d_model=d_model, n_head=4, n_layer=2, total_seq_length=20
)

# Body: project the concatenated features to d_model, then run the transformer
body = tr.SequentialBlock(
    input_module,
    tr.MLPBlock([d_model]),
    tr.TransformerBlock(transformer_config, masking=input_module.masking),
)

# Head: next-item prediction with tied item-embedding weights
head = tr.Head(
    body,
    tr.NextItemPredictionTask(
        weight_tying=True,
        metrics=[tr.ranking_metric.NDCGAt(top_ks=[20, 40], labels_onehot=True)],
    ),
    inputs=input_module,
)

model = tr.Model(head)
```

Switching from next-item prediction to binary classification (e.g., click prediction) means swapping masking="causal" for masking=None and replacing NextItemPredictionTask with BinaryClassificationTask("click"). The rest of the pipeline is identical.

For production, the library integrates with NVTabular for GPU-accelerated preprocessing and Triton Inference Server for serving. The schema file exports from NVTabular and is consumed directly by TabularSequenceFeatures.from_schema() — one format for the whole pipeline.

Rough edges

Before March 2024, the repository had gone more than a year without a meaningful commit. Commits in early 2026 are isolated bug fixes to a scaler load route, nothing architectural. The project is technically alive but not actively developed.

The dependency surface is heavy. You're pulling in transformers, merlin-core, nvtabular, RAPIDS cuDF, and the full PyTorch stack. Getting this running without a CUDA-equipped machine means using their Docker containers, which adds friction to evaluation.

Documentation is decent for the happy path but thin on edge cases. The masking strategies are documented at class level but there's no guide explaining when to use CLM vs MLM for your specific recommendation task — that requires reading the ACM RecSys '21 paper they reference.

Integration tests exist under tests/integration/ but unit test coverage in tests/unit/ is sparse for the core feature and masking modules where you'd most want it.

Bottom line

If you're running session-based recommendation on GPU infrastructure and want to evaluate multiple transformer architectures without rewriting your input pipeline for each, Transformers4Rec removes a lot of that work. The schema-driven input layer and swappable transformer body are the two decisions that make it useful. That said, the maintenance posture means you're betting on a mostly-stable library, not an actively evolving one — factor that in before building a production system around it.