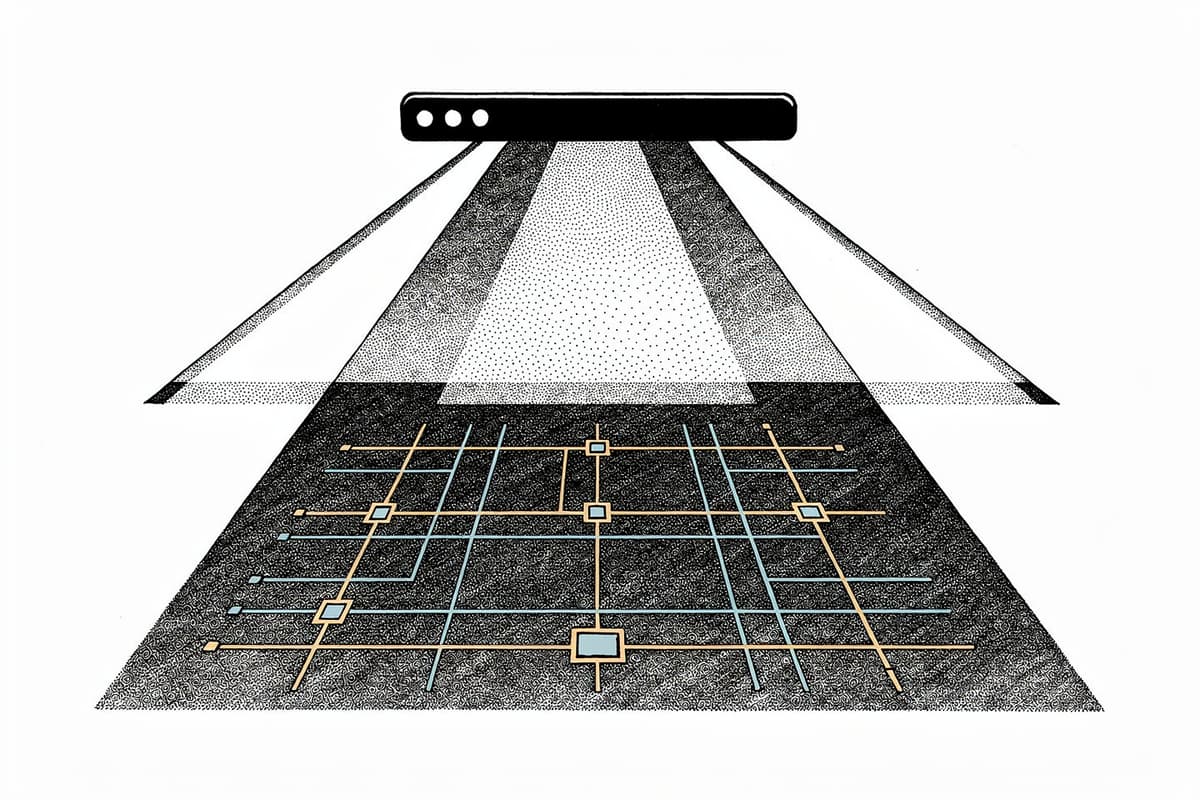

Dayflow is a native macOS app that watches your screen, sends frames to an AI provider, and produces a timestamped timeline of what you did — without continuous screen-recording indicators or data leaving your machine by default.

Why I starred it

Most time trackers work at the app-window level. They know you had VS Code open for three hours; they have no idea whether you were shipping code or reading Hacker News inside it. Dayflow goes one level deeper: it uses AI to describe what's actually on screen, then groups those descriptions into labeled activity cards.

What caught my attention was the architecture: it's not cloud-dependent. You can run it entirely local with Ollama or LM Studio, use a Gemini API key, or pipe frames through the Claude Code or Codex CLIs using your existing subscription. The privacy story is configurable at the infrastructure level, not just through a checkbox in a settings panel.

How it works

The entry point is ScreenRecorder.swift, which was rewritten to use SCScreenshotManager for periodic screenshots instead of a continuous video capture stream. That's a meaningful choice: it removes the persistent screen-recording indicator in the menu bar while still giving the analysis pipeline a regular supply of frames.

```swift
// ScreenRecorder.swift
enum ScreenshotConfig {
    static var interval: TimeInterval {
        let stored = UserDefaults.standard.double(forKey: "screenshotIntervalSeconds")
        return stored > 0 ? stored : 10.0 // Default: 10 seconds
    }
}

private enum Config {
    static let targetHeight: CGFloat = 1080
    static let jpegQuality: CGFloat = 0.85
}
```

Screenshots are scaled to 1080p at 85% JPEG quality, a deliberate balance between context legibility and storage footprint.
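To make the 1080p target concrete, here is a minimal sketch of the arithmetic involved in scaling a frame to a fixed height. The function name `scaledSize` and the choice to never upscale smaller frames are my assumptions for illustration; I have not checked how Dayflow handles those details.

```swift
/// Hypothetical helper, not taken from Dayflow: computes the output size for a
/// frame scaled to a target height while preserving its aspect ratio.
func scaledSize(width: Double, height: Double,
                targetHeight: Double = 1080) -> (width: Int, height: Int) {
    // Assumption: frames already at or below the target height are left alone.
    guard height > targetHeight else {
        return (Int(width.rounded()), Int(height.rounded()))
    }
    let scale = targetHeight / height
    return (Int((width * scale).rounded()), Int(targetHeight.rounded()))
}

let retina = scaledSize(width: 3840, height: 2160)  // a 4K frame is halved
let small = scaledSize(width: 1280, height: 720)    // a 720p frame is untouched
```

At the default 10-second interval this runs 360 times per hour of recording, which is why the output size and JPEG quality matter for the storage footprint.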

The recorder runs a proper state machine with four states (idle, starting, capturing, paused) rather than just a boolean flag. This matters because the app needs to handle system sleep, screen lock, and deeplink-triggered pause/resume without race conditions:

```swift
private enum RecorderState: Equatable {
    case idle
    case starting
    case capturing
    case paused
}
```

Screenshots get batched, then handed to AnalysisManager.swift, which owns the coordination between the storage layer (SQLite via GRDB) and whichever LLM provider is configured. The analysis timer fires every 60 seconds; it pulls unprocessed batches from the last 24 hours and routes them through LLMService.
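Going back to the recorder's four states for a moment: one way to see why an enum beats a boolean is to write the transitions as a pure function that rejects anything unexpected. This is a sketch under my own assumptions. The `RecorderEvent` names and the exact transition table are hypothetical, and only the four state names come from the source.

```swift
// Mirrors the four states quoted above; everything else here is hypothetical.
enum RecorderState: Equatable {
    case idle, starting, capturing, paused
}

// Hypothetical event names, chosen for illustration only.
enum RecorderEvent {
    case startRequested
    case captureReady
    case pauseRequested   // e.g. system sleep, screen lock, or a deeplink
    case resumeRequested
    case stopRequested
}

/// Returns the next state, or nil when the event is not valid in `state`.
func nextState(from state: RecorderState, on event: RecorderEvent) -> RecorderState? {
    switch (state, event) {
    case (.idle, .startRequested):      return .starting
    case (.starting, .captureReady):    return .capturing
    case (.capturing, .pauseRequested): return .paused
    case (.paused, .resumeRequested):   return .starting
    case (.starting, .stopRequested),
         (.capturing, .stopRequested),
         (.paused, .stopRequested):     return .idle
    default:                            return nil
    }
}
```

With a single `isRecording` flag, a pause that arrives while capture is still starting up has nowhere to go. With explicit states, that combination is either handled or visibly rejected.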

The interesting part of LLMService.swift is how it abstracts provider selection into two separate action structs — BatchProviderActions for the screenshot→timeline pipeline and TextProviderActions for freeform generation:

```swift
private struct BatchProviderActions {
    let transcribeScreenshots:
        ([Screenshot], Date, Int64?) async throws -> (observations: [Observation], log: LLMCall)
    let generateActivityCards:
        ([Observation], ActivityGenerationContext, Int64?) async throws -> (
            cards: [ActivityCardData], log: LLMCall
        )
}
```

This abstraction is what makes multi-provider support clean. Swapping from Gemini to Ollama doesn't change the pipeline, only which closures populate those structs.
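The closure-struct pattern is easier to appreciate in a stripped-down form. The sketch below is not Dayflow's code: the types are simplified stand-ins, the closures are synchronous rather than `async`, and `Provider`, `BatchActions`, `makeActions`, and `runPipeline` are names I made up for illustration.

```swift
// Simplified stand-in; the real Observation type carries more data.
struct Observation: Equatable {
    let text: String
}

// One closure per pipeline step. The pipeline only ever sees this struct.
struct BatchActions {
    let transcribe: ([String]) throws -> [Observation]
}

// Hypothetical provider kinds that differ in how they consume frames.
enum Provider {
    case perFrame    // describes each frame on its own
    case wholeBatch  // ingests the whole batch in one go
}

func makeActions(for provider: Provider) -> BatchActions {
    switch provider {
    case .perFrame:
        return BatchActions(transcribe: { frames in
            frames.map { Observation(text: "frame: \($0)") }
        })
    case .wholeBatch:
        return BatchActions(transcribe: { frames in
            [Observation(text: "batch of \(frames.count) frames")]
        })
    }
}

// The pipeline body is identical no matter which provider built the actions.
func runPipeline(frames: [String], actions: BatchActions) throws -> [Observation] {
    try actions.transcribe(frames)
}
```

Compared with a protocol and one conforming type per provider, a struct of closures keeps the wiring in a single place and makes it cheap to swap one step without touching the others.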

The LLM call count per batch varies dramatically by provider. Gemini can ingest video natively — two calls per batch. Local models have to describe each frame individually, then merge — 33+ calls for the same window. The ChatGPT/Claude path batches frames 10 at a time, landing at 4–6 calls. That's not just a performance difference; it's a cost and latency story if you're deciding which provider to use.
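The gap between those numbers comes down to simple arithmetic: one call per frame versus one call per chunk of frames. Here is a rough sketch of that estimate. The function names and the `followUpCalls` parameter (merging, card generation, and so on) are hypothetical, and the 30-frame batch in the usage lines is an assumed figure, not something I measured in Dayflow.

```swift
/// Per-frame transcription: one call for every frame, plus follow-up calls.
/// `followUpCalls` is a free parameter, not a number taken from Dayflow.
func perFrameCalls(frames: Int, followUpCalls: Int) -> Int {
    frames + followUpCalls
}

/// Chunked transcription: one call per chunk of frames, plus follow-up calls.
func chunkedCalls(frames: Int, chunkSize: Int, followUpCalls: Int) -> Int {
    precondition(chunkSize > 0, "chunkSize must be positive")
    let chunks = (frames + chunkSize - 1) / chunkSize  // ceiling division
    return chunks + followUpCalls
}

// Assumed batch of 30 frames with a single follow-up call.
let localEstimate = perFrameCalls(frames: 30, followUpCalls: 1)
let chunkedEstimate = chunkedCalls(frames: 30, chunkSize: 10, followUpCalls: 1)
```

The per-frame count grows linearly with the batch, while the chunked count grows ten times more slowly, which matches the order-of-magnitude difference described above.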

ChatCLIRunner.swift is worth a look if you want to understand how Dayflow shells out to Claude Code or Codex. It allocates a PTY and spawns processes through the user's login shell, looking up the actual shell path with getpwuid(getuid()), so it picks up tools installed via Homebrew, nvm, or cargo without needing manual PATH configuration:

```swift
static var userLoginShell: URL {
    if let entry = getpwuid(getuid()),
       let shellPath = String(validatingUTF8: entry.pointee.pw_shell)
    {
        return URL(fileURLWithPath: shellPath)
    }
    return URL(fileURLWithPath: "/bin/zsh")
}
```

Storage lives at ~/Library/Application Support/Dayflow/ in a SQLite database (chunks.sqlite) with configurable limits between 1GB and 20GB.
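Returning to the login-shell lookup: once you have the shell's path, running a command through it is what lets the shell's own profile files set up PATH. The sketch below shows that idea with Foundation's `Process`. It is not Dayflow's implementation: it uses a plain `Pipe` where the article describes PTY allocation, the function names are hypothetical, and the `-l -c` flags are an assumption that holds for zsh and bash.

```swift
import Foundation

/// Builds a Process that runs `command` through a login shell, so the shell's
/// profile files get a chance to set PATH first.
/// Assumption: the shell accepts `-l` (login) and `-c` (command string).
func makeLoginShellProcess(shell: URL, command: String) -> Process {
    let process = Process()
    process.executableURL = shell
    process.arguments = ["-l", "-c", command]
    return process
}

/// Runs the command and returns whatever it wrote to standard output.
func runThroughLoginShell(shell: URL, command: String) throws -> String {
    let process = makeLoginShellProcess(shell: shell, command: command)
    let stdout = Pipe()
    process.standardOutput = stdout
    try process.run()
    // Read before waiting so a large output cannot fill the pipe and stall the child.
    let data = stdout.fileHandleForReading.readDataToEndOfFile()
    process.waitUntilExit()
    return String(decoding: data, as: UTF8.self)
}
```

Calling `runThroughLoginShell(shell: URL(fileURLWithPath: "/bin/zsh"), command: "which node")` should resolve a Node binary installed through nvm, provided the user's profile files set that up. A PTY matters when the child CLI changes its behavior depending on whether it is attached to a terminal, which a pipe cannot imitate.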

Using it

Install via Homebrew:

```bash
brew install --cask dayflow
```

Or download the DMG directly from GitHub Releases. Grant Screen & System Audio Recording permission in System Settings, pick an AI provider, and it starts building your timeline.

Automation is available through a URL scheme:

```bash
open dayflow://start-recording
open dayflow://stop-recording
```

These work from Shortcuts, Alfred, or any hotkey launcher. State changes triggered via deeplink are logged separately in analytics — a small but considerate debugging detail.
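If you are curious what handling such a URL can look like on the receiving side, here is a small sketch that maps the two deeplinks to actions. The `DeeplinkAction` type and `parseDeeplink` function are hypothetical names of mine. Only the two URLs themselves come from the app, and I have not checked how Dayflow actually parses them.

```swift
import Foundation

// Hypothetical action type; only the two URLs shown earlier come from the app.
enum DeeplinkAction: Equatable {
    case startRecording
    case stopRecording
}

/// Maps a dayflow:// URL to an action, or returns nil for anything unrecognized.
func parseDeeplink(_ url: URL) -> DeeplinkAction? {
    // In dayflow://start-recording the action name sits in the host position.
    guard url.scheme?.lowercased() == "dayflow",
          let action = url.host?.lowercased() else {
        return nil
    }
    switch action {
    case "start-recording": return .startRecording
    case "stop-recording":  return .stopRecording
    default:                return nil
    }
}
```

Returning nil for unknown input keeps a mistyped or malicious URL from triggering anything, which is worth having on any scheme that other apps can invoke.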

Rough edges

Test coverage is thin: there's exactly one test file (TimeParsingTests.swift) with four cases covering the parseTimeHMMA utility. The entire capture, analysis, and LLM pipeline has no automated tests. That's a meaningful gap for an app handling screen data.

Local model quality is a known tradeoff — the README is upfront that Ollama/LM Studio can underperform on complex summarization, especially compared to frontier models. Running 33+ inference calls per batch locally is also GPU-heavy and will drain an M-series MacBook significantly on battery.

The Journal feature is still in beta with access codes, and the Dashboard feature requires ChatGPT Plus or Claude Pro with the respective CLIs installed — not quite plug-and-play for most users.

Bottom line

If you want context-aware time tracking that doesn't funnel your screen through someone else's servers, Dayflow is the most thoughtful implementation I've seen. The multi-provider architecture is the differentiator — use Gemini for quality, Ollama for full offline privacy, or Claude Code if you already pay for a subscription.