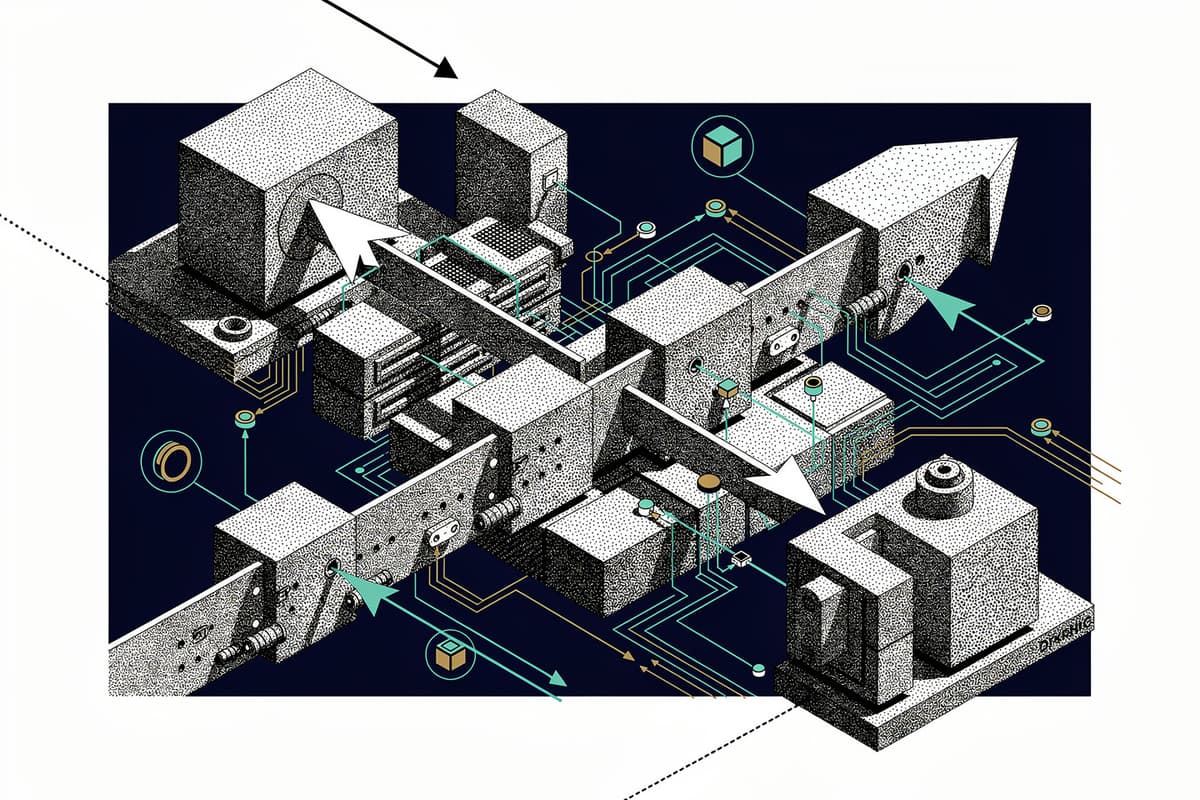

Sim is a visual canvas for composing AI agent workflows. You connect blocks — agents, tools, conditions, loops, parallel branches — and the execution engine runs the graph in the right order, resolving inputs between blocks as it goes.

Why I starred it

The space is crowded. n8n, Flowise, Langflow, Dify all exist. What made me stop on Sim was the execution model. Most drag-and-drop agent tools are shallow: the visual layer is a prettier interface over sequential API calls. Sim took a different approach and built a proper DAG executor from scratch, with first-class support for parallel fan-out and human-in-the-loop pauses.

It picked up 27k stars in a relatively short time, is actively maintained, and has a codebase organized well enough to actually understand.

How it works

The entry point is apps/sim/executor/index.ts, which exports a single DAGExecutor class from executor/execution/executor.ts. That class accepts a SerializedWorkflow — a JSON representation of nodes and edges — and compiles it into a DAG before execution.

The DAGBuilder in executor/dag/builder.ts is where the graph gets constructed. It runs five sequential constructors: path, loop, parallel, node, and edge. Path construction first determines which blocks are reachable from the trigger. Then loops and parallels inject sentinel nodes — synthetic start/end markers — so the engine can treat subflows as atomic units:

// executor/dag/builder.ts
build(workflow: SerializedWorkflow, options: DAGBuildOptions = {}): DAG {
  const reachableBlocks = this.pathConstructor.execute(workflow, triggerBlockId, includeAllBlocks)
  this.loopConstructor.execute(dag, reachableBlocks)
  this.parallelConstructor.execute(dag, reachableBlocks)
  const { blocksInLoops, blocksInParallels } = this.nodeConstructor.execute(
    workflow, dag, reachableBlocks
  )
  this.edgeConstructor.execute(workflow, dag, blocksInParallels, blocksInLoops, ...)
}

Once the DAG is built, ExecutionEngine in executor/execution/engine.ts runs it. The loop is tight: initialize a ready queue, process blocks as their dependencies resolve, track in-flight promises, and drain. Cancellation checks hit Redis every 500ms if Redis is configured; otherwise it falls back to in-process signals.

// executor/execution/engine.ts
async run(triggerBlockId?: string): Promise<ExecutionResult> {
  this.initializeQueue(triggerBlockId)
  while (this.hasWork()) {
    if ((await this.checkCancellation()) || this.errorFlag || this.stoppedEarlyFlag) break
    await this.processQueue()
  }
  if (!this.cancelledFlag) {
    await this.waitForAllExecutions()
  }
  // ...
}
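To make that loop concrete, here is a minimal sketch of the same pattern: seed a ready queue, start every block whose dependencies are met, track in-flight promises, and drain. This is not Sim's code. `DagNode`, `runDag`, and the dependency-count bookkeeping are hypothetical names chosen for illustration, and the cancellation and error flags are left out.

```typescript
// Hypothetical sketch of a ready-queue DAG runner. Not Sim's actual engine.
type DagNode = {
  id: string
  deps: string[] // ids of nodes that must finish first
  run: (inputs: Record<string, unknown>) => Promise<unknown>
}

async function runDag(nodes: DagNode[]): Promise<Map<string, unknown>> {
  const outputs = new Map<string, unknown>()
  const unmet = new Map<string, number>() // unmet dependency count per node
  const dependents = new Map<string, DagNode[]>()
  const ready: DagNode[] = []
  const inFlight = new Set<Promise<void>>()

  // Initialize the queue: nodes without dependencies are ready immediately.
  nodes.forEach((node) => {
    unmet.set(node.id, node.deps.length)
    node.deps.forEach((dep) => {
      const list = dependents.get(dep) ?? []
      list.push(node)
      dependents.set(dep, list)
    })
    if (node.deps.length === 0) ready.push(node)
  })

  const start = (node: DagNode): void => {
    // Collect the outputs of this node's dependencies as its inputs.
    const inputs: Record<string, unknown> = {}
    node.deps.forEach((dep) => {
      inputs[dep] = outputs.get(dep)
    })
    const task: Promise<void> = node.run(inputs).then((result) => {
      inFlight.delete(task)
      outputs.set(node.id, result)
      // Unlock any dependent whose last dependency just finished.
      const next = dependents.get(node.id) ?? []
      next.forEach((child) => {
        const left = (unmet.get(child.id) ?? 0) - 1
        unmet.set(child.id, left)
        if (left === 0) ready.push(child)
      })
    })
    inFlight.add(task)
  }

  // Main loop: start everything that is ready, then wait for any task to finish.
  while (ready.length > 0 || inFlight.size > 0) {
    while (ready.length > 0) start(ready.shift() as DagNode)
    if (inFlight.size > 0) await Promise.race(inFlight)
  }
  return outputs
}
```

Given a diamond-shaped graph (a feeds b and c, which both feed d), `runDag` starts b and c together and only starts d once both have resolved. A node that rejects makes `runDag` reject; a real engine would also need to handle cycles and cancellation.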

The ParallelOrchestrator in executor/orchestrators/parallel.ts handles fan-out. When a parallel block fires, it resolves the distribution input (either a fixed count or a collection to distribute across branches), creates branch-scoped contexts, and tracks aggregation. When all branches complete, the sentinel node collapses and the downstream nodes become ready.
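The fan-out idea can be sketched in a few lines. This is an illustration under my own assumptions, not the ParallelOrchestrator itself: `runParallel`, `Distribution`, and `BranchContext` are hypothetical names, and a single `Promise.all` stands in for the sentinel-based aggregation described above.

```typescript
// Hypothetical sketch of parallel fan-out. Names are illustrative, not Sim's API.
type Distribution = number | unknown[]

type BranchContext = {
  branchIndex: number
  branchTotal: number
  currentItem: unknown // undefined when distributing by a fixed count
}

async function runParallel<T>(
  distribution: Distribution,
  body: (ctx: BranchContext) => Promise<T>
): Promise<T[]> {
  // Resolve the distribution input into one entry per branch.
  const items: unknown[] =
    typeof distribution === "number"
      ? new Array(distribution).fill(undefined)
      : distribution

  // Each branch gets its own context object, so branches never share state.
  const branches = items.map((item, index) =>
    body({ branchIndex: index, branchTotal: items.length, currentItem: item })
  )

  // Aggregation: resolves only when every branch is done, in branch order.
  return Promise.all(branches)
}
```

Passing a number creates that many branches with no item attached; passing an array creates one branch per element. Results come back in branch order regardless of which branch finishes first.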

Variable resolution is its own subsystem. VariableResolver in executor/variables/resolver.ts chains five resolvers — loop, parallel, workflow, env, block — in priority order. Template expressions like {{blockId.output.value}} get resolved at block execution time, not at build time, so parallel branches get the right iteration context.
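A priority-ordered resolver chain is easier to picture with a small example. The sketch below is hypothetical and much simpler than Sim's VariableResolver: `lookup`, `makeResolver`, and `resolveTemplate` are names I made up, and each resolver is just a plain object scope. What it shows is the ordering rule: the first resolver that returns a value wins.

```typescript
// Hypothetical sketch of a priority-ordered resolver chain. Not Sim's VariableResolver.
type Scope = Record<string, unknown>

type Resolver = {
  name: string
  // Returns undefined when this resolver does not own the reference.
  resolve: (path: string[]) => unknown
}

// Walk a dotted path through nested objects; undefined if any step is missing.
function lookup(scope: Scope, path: string[]): unknown {
  let current: unknown = scope
  for (const key of path) {
    if (typeof current !== "object" || current === null) return undefined
    current = (current as Scope)[key]
  }
  return current
}

function makeResolver(name: string, scope: Scope): Resolver {
  return { name, resolve: (path) => lookup(scope, path) }
}

function resolveTemplate(template: string, chain: Resolver[]): string {
  return template.replace(/\{\{\s*([\w.]+)\s*\}\}/g, (match, ref: string) => {
    const path = ref.split(".")
    for (const resolver of chain) {
      const value = resolver.resolve(path)
      if (value !== undefined) return String(value)
    }
    return match // leave unresolved references untouched
  })
}

// Earlier entries take priority over later ones.
const chain: Resolver[] = [
  makeResolver("loop", { loop: { index: 2 } }),
  makeResolver("env", { API_HOST: "example.test" }),
  makeResolver("block", { fetch1: { output: { value: "ok" } } }),
]

const resolved = resolveTemplate("{{loop.index}} {{fetch1.output.value}} {{missing.x}}", chain)
// resolved === "2 ok {{missing.x}}"
```

Because `resolveTemplate` runs when it is called rather than when the graph is built, each parallel branch can pass a chain built from its own context and get its own iteration values.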

The block library in apps/sim/blocks/blocks/ is huge. I counted connectors for Airtable, Ahrefs, Asana, Athena, Apollo, Devin, Databricks, Discord — and that's just the first third of the directory. Each block is a TypeScript file exporting a schema (inputs, outputs, config) that the executor handles uniformly through a registry in executor/handlers/registry.ts.
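The schema-plus-registry pattern looks roughly like the sketch below. I have not reproduced Sim's actual types here: `BlockSchema`, `BlockHandler`, and `HandlerRegistry` are hypothetical, and the "echo" block is invented for the example. The idea it illustrates is that the executor only needs the block's type string to validate inputs and dispatch.

```typescript
// Hypothetical sketch of a schema + handler registry. Not Sim's actual types.
type BlockSchema = {
  type: string
  inputs: string[] // names of required inputs
  outputs: string[] // names of produced outputs
}

type BlockHandler = (inputs: Record<string, unknown>) => Promise<Record<string, unknown>>

class HandlerRegistry {
  private entries = new Map<string, { schema: BlockSchema; handler: BlockHandler }>()

  register(schema: BlockSchema, handler: BlockHandler): void {
    if (this.entries.has(schema.type)) {
      throw new Error("duplicate block type: " + schema.type)
    }
    this.entries.set(schema.type, { schema, handler })
  }

  // Uniform entry point: validate against the schema, then dispatch by type.
  async execute(type: string, inputs: Record<string, unknown>): Promise<Record<string, unknown>> {
    const entry = this.entries.get(type)
    if (!entry) throw new Error("unknown block type: " + type)
    const missing = entry.schema.inputs.filter((name) => !(name in inputs))
    if (missing.length > 0) throw new Error("missing inputs: " + missing.join(", "))
    return entry.handler(inputs)
  }
}

// Invented example block, used only to exercise the registry.
const registry = new HandlerRegistry()
registry.register(
  { type: "echo", inputs: ["text"], outputs: ["text"] },
  async (inputs) => ({ text: inputs.text })
)
```

Adding a connector in this model means adding one schema and one handler; the dispatch code does not change.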

Using it

The fastest path is npx simstudio — it pulls a Docker image and runs at localhost:3000. For self-hosting without Docker:

git clone https://github.com/simstudioai/sim.git && cd sim
bun install
bun run prepare
cp apps/sim/.env.example apps/sim/.env
cp packages/db/.env.example packages/db/.env
# set DATABASE_URL in both .env files
cd packages/db && bun run db:migrate
# return to the repo root before starting the dev processes
cd ../..
bun run dev:full

dev:full starts three processes: the Next.js app, a Socket.io server for realtime execution events, and a BullMQ worker for queue-backed execution. If Redis isn't configured, the worker idles and execution runs inline.

The API trigger block exposes each workflow as an HTTP endpoint. You can call it like any webhook:

curl -X POST https://your-instance/api/workflows/{id}/execute \
  -H "x-api-key: YOUR_KEY" \
  -H "Content-Type: application/json" \
  -d '{"input": "hello"}'

Rough edges

Debug mode isn't implemented. DAGExecutor.continueExecution() logs a warning and returns an error — the docstring says "not yet implemented in the refactored executor". If you need step-through debugging, you're blocked.

The Copilot feature (natural language to workflow) runs on Sim's own servers even in self-hosted mode. You have to set a COPILOT_API_KEY from sim.ai, which means a cloud dependency for what looks like a local tool.

Test coverage is uneven. executor/orchestrators/parallel.test.ts exists. executor/dag/builder.test.ts exists. But many block connectors have no tests. The vitest config is there, so the infrastructure is set up — coverage just isn't consistent.

The 12GB RAM recommendation for Docker Compose is aggressive. I don't have enough data to say whether a typical dev machine bogs down under it or runs it comfortably.

Bottom line

If you're building internal AI agent workflows and want a visual editor with a real execution engine underneath, Sim is the most technically serious option in the open-source category. The DAG executor with parallel orchestration and human-in-the-loop pauses is genuinely well-designed. The missing debug mode and Copilot cloud dependency are the main reasons to evaluate carefully before committing.