Lingo.dev is an open-source i18n toolkit that runs LLM translation across your project's locale files. One command updates every target locale from the source. A lockfile (i18n.lock) tracks checksums so repeat runs skip unchanged strings entirely.

Why I starred it

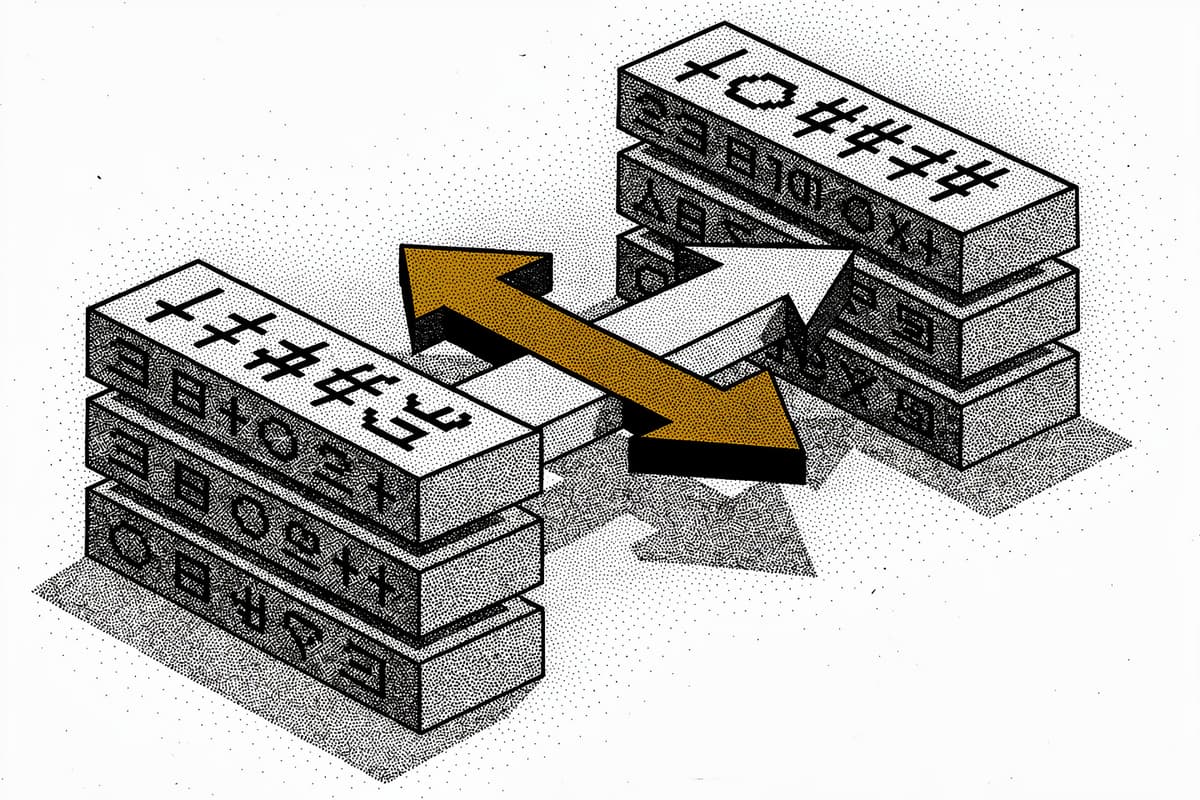

Most i18n workflows end up in one of two bad places: send files to human translators and wait days, or bolt on a hand-rolled script that calls an LLM and then breaks when a key is renamed. The specific engineering problem Lingo.dev is solving, delta-based translation with rename detection, is what caught my eye. It's not just "call GPT on your JSON."

The CLI also ships with CI/CD support, an MCP server for IDE-assisted setup, a React compiler plugin for build-time localization, and a runtime SDK for dynamic content. That's a wide surface area, but the core CLI execution loop is focused enough to read in one sitting.

How it works

The execution path starts in packages/cli/src/cli/cmd/run/execute.ts. When you run npx lingo.dev@latest run, the CLI first calls plan() to build a list of CmdRunTask objects — one per bucket/locale combination — then fans them out through p-limit with configurable concurrency.
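To make the plan-then-fan-out shape concrete, here is a minimal sketch. It is not the code from execute.ts: `Task`, `planTasks`, and `runWithLimit` are hypothetical names, and the hand-written limiter only stands in for what p-limit provides, so the example stays dependency-free.

```typescript
// Hypothetical task shape; the real CmdRunTask carries more fields.
type Task = { bucket: string; locale: string };

// Stand-in for plan(): one task per bucket/locale combination.
function planTasks(buckets: string[], locales: string[]): Task[] {
  const tasks: Task[] = [];
  for (const bucket of buckets) {
    for (const locale of locales) tasks.push({ bucket, locale });
  }
  return tasks;
}

// Run `worker` over every item with at most `concurrency` calls in flight.
// Results keep the order of `items`, not the order of completion.
async function runWithLimit<T, R>(
  items: T[],
  concurrency: number,
  worker: (item: T) => Promise<R>,
): Promise<R[]> {
  const results: R[] = new Array(items.length);
  let next = 0;
  // Each lane pulls the next unclaimed index until the list is exhausted.
  const lane = async (): Promise<void> => {
    while (next < items.length) {
      const index = next++;
      results[index] = await worker(items[index]);
    }
  };
  const laneCount = Math.max(1, Math.min(concurrency, items.length));
  await Promise.all(Array.from({ length: laneCount }, () => lane()));
  return results;
}

// Usage: two buckets times three locales gives six tasks, run two at a time.
const plannedTasks = planTasks(["json", "yaml"], ["es", "fr", "de"]);
const finished = runWithLimit(plannedTasks, 2, async (task) => `${task.bucket}:${task.locale}`);
```

The point of the shape is that planning is cheap and synchronous, while the expensive part (the LLM call per task) is the only thing the concurrency setting throttles.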

The interesting part is in the delta processor at packages/cli/src/cli/utils/delta.ts. Before any translation happens, it loads the i18n.lock file (YAML, not JSON — avoids merge conflict noise) and computes a delta against the current source file:

    // packages/cli/src/cli/utils/delta.ts
    export type Delta = {
      added: string[];
      removed: string[];
      updated: string[];
      renamed: [string, string][];
      hasChanges: boolean;
    };

    async calculateDelta(params: {
      sourceData: Record<string, any>;
      targetData: Record<string, any>;
      checksums: Record<string, string>;
    }): Promise<Delta> {
      // Excerpt: the code that builds `added`, `removed`, and `renamed` is omitted here.
      const updated = Object.keys(params.sourceData).filter(
        (key) =>
          md5(params.sourceData[key]) !== params.checksums[key] &&
          params.checksums[key],
      );

      // rename detection: if a removed key's checksum matches an added key's checksum,
      // it's a rename, so no new translation is needed
      for (const addedKey of added) {
        const addedHash = md5(params.sourceData[addedKey]);
        for (const removedKey of removed) {
          if (params.checksums[removedKey] === addedHash) {
            renamed.push([removedKey, addedKey]);
            break;
          }
        }
      }
      // ... (rest of the method omitted)
    }

Rename detection via MD5 checksum comparison is the non-obvious move here. If you rename button.submit to button.save without changing the English value, the CLI knows it doesn't need to retranslate — it just renames the existing translation in every locale file. That saves real money when you're translating to 10+ locales with a paid LLM provider.
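For readers who want to run the idea end to end, here is a self-contained sketch. It is an approximation under assumptions, not the project's implementation: `computeDelta` and `applyRenames` are hypothetical names, `added` and `removed` are derived from the checksum map alone (the real method also receives targetData), values are hashed via JSON.stringify, and matched keys are dropped from `added`/`removed` as my own simplification.

```typescript
import { createHash } from "node:crypto";

type Delta = {
  added: string[];
  removed: string[];
  updated: string[];
  renamed: [string, string][];
  hasChanges: boolean;
};

// Assumption: hash the JSON form of the value; the real md5 helper may differ.
const md5 = (value: unknown): string =>
  createHash("md5").update(JSON.stringify(value)).digest("hex");

// Compare current source strings against checksums recorded on the last run.
function computeDelta(
  sourceData: Record<string, unknown>,
  checksums: Record<string, string>,
): Delta {
  const sourceKeys = Object.keys(sourceData);
  let added = sourceKeys.filter((key) => !(key in checksums));
  let removed = Object.keys(checksums).filter((key) => !(key in sourceData));
  const updated = sourceKeys.filter(
    (key) => key in checksums && md5(sourceData[key]) !== checksums[key],
  );

  // A removed key whose old checksum equals an added key's hash is a rename.
  const renamed: [string, string][] = [];
  for (const addedKey of added) {
    const addedHash = md5(sourceData[addedKey]);
    const oldKey = removed.find(
      (key) => checksums[key] === addedHash && !renamed.some(([from]) => from === key),
    );
    if (oldKey !== undefined) renamed.push([oldKey, addedKey]);
  }
  // Renamed keys are no longer treated as plain additions or removals.
  added = added.filter((key) => !renamed.some(([, to]) => to === key));
  removed = removed.filter((key) => !renamed.some(([from]) => from === key));

  const hasChanges = added.length + removed.length + updated.length + renamed.length > 0;
  return { added, removed, updated, renamed, hasChanges };
}

// Carry existing translations over to the new key names without retranslating.
function applyRenames(
  targetData: Record<string, string>,
  renamed: [string, string][],
): Record<string, string> {
  const result: Record<string, string> = { ...targetData };
  for (const [from, to] of renamed) {
    if (from in result) {
      result[to] = result[from];
      delete result[from];
    }
  }
  return result;
}

// Usage: button.submit was renamed to button.save; title changed its text.
const source = { "button.save": "Submit", title: "Hello!" };
const lock = { "button.submit": md5("Submit"), title: md5("Hello") };
const delta = computeDelta(source, lock);
const es = applyRenames({ "button.submit": "Enviar", title: "Hola" }, delta.renamed);
```

In this run only `title` lands in `updated` and would be sent for translation; the Spanish "Enviar" simply moves to the new key.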

Each task goes through a loader abstraction defined in packages/cli/src/cli/loaders/_types.ts:

    export interface ILoaderDefinition<I, O, C> {
      pull(locale: string, input: I, initCtx: C, ...): Promise<O>;
      push(locale: string, data: O, originalInput: I | null, ...): Promise<I>;
      pullHints?(originalInput: I): Promise<O | undefined>;
    }
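As an illustration of what implementing that contract might look like, here is a toy loader for a flat JSON file. `SimpleLoader` is a hypothetical, trimmed-down version of the interface (the parameters elided in the excerpt are left out, as are the context type and pullHints), and `flatJsonLoader` is not one of the project's loaders.

```typescript
// Hypothetical, simplified loader contract: pull parses, push serializes.
interface SimpleLoader<I, O> {
  pull(locale: string, input: I): Promise<O>;
  push(locale: string, data: O, originalInput: I | null): Promise<I>;
}

// Toy loader: raw JSON text in, flat key/value record out, and back again.
const flatJsonLoader: SimpleLoader<string, Record<string, string>> = {
  async pull(_locale, input) {
    const parsed: unknown = JSON.parse(input || "{}");
    const out: Record<string, string> = {};
    if (parsed !== null && typeof parsed === "object") {
      const record = parsed as Record<string, unknown>;
      for (const key of Object.keys(record)) {
        const value = record[key];
        if (typeof value === "string") out[key] = value; // ignore non-string values
      }
    }
    return out;
  },
  async push(_locale, data, _originalInput) {
    // A real loader could use the original input to preserve formatting;
    // this sketch just re-serializes with two-space indentation.
    return JSON.stringify(data, null, 2) + "\n";
  },
};

// Usage: pull the English file, then push the (here untranslated) data for "es".
const roundTrip = flatJsonLoader
  .pull("en", '{"greeting":"Hello","count":3}')
  .then((data) => flatJsonLoader.push("es", data, null));
```

Keeping parsing and serialization behind the same two methods is what lets the translation step work on plain records regardless of file format.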

The pull/push separation matters for formats where multiple locales share a single file (Xcode .xcstrings, for example). The per-file IO limiter in execute.ts serializes reads and writes to those files to prevent races while still running translations concurrently:

    const perFileIoLimiters = new Map<string, LimitFunction>();

    const getFileIoLimiter = (bucketPathPattern: string): LimitFunction => {
      // Excerpt: how lockKey is derived from bucketPathPattern is not shown here.
      if (!perFileIoLimiters.has(lockKey)) {
        perFileIoLimiters.set(lockKey, pLimit(1));
      }
      return perFileIoLimiters.get(lockKey)!;
    };
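The same pattern can be sketched without p-limit to show why it prevents races. This is a simplified illustration, not the project's code: `createSerialLimiter` is a hand-written stand-in for pLimit(1), `getFileLimiter` is a hypothetical name, and the file path itself is used as the lock key.

```typescript
// Minimal stand-in for pLimit(1): runs submitted jobs one at a time, in order.
type SerialLimiter = <T>(job: () => Promise<T>) => Promise<T>;

function createSerialLimiter(): SerialLimiter {
  let tail: Promise<unknown> = Promise.resolve();
  return <T>(job: () => Promise<T>): Promise<T> => {
    // Start only after the previous job has settled, whether it passed or failed.
    const run = tail.then(() => job());
    // Swallow errors on the chain itself so one failure does not block later jobs.
    tail = run.catch(() => undefined);
    return run;
  };
}

const limiters = new Map<string, SerialLimiter>();

// One limiter per file, created lazily on first use.
function getFileLimiter(filePath: string): SerialLimiter {
  let limiter = limiters.get(filePath);
  if (!limiter) {
    limiter = createSerialLimiter();
    limiters.set(filePath, limiter);
  }
  return limiter;
}

// Usage: two locales writing to the same shared file are serialized.
const ioLog: string[] = [];
const writeJob = (name: string, ms: number) => async (): Promise<string> => {
  ioLog.push(`start ${name}`);
  await new Promise<void>((resolve) => setTimeout(resolve, ms));
  ioLog.push(`end ${name}`);
  return name;
};
const sharedFileWrites = Promise.all([
  getFileLimiter("Localizable.xcstrings")(writeJob("es", 20)),
  getFileLimiter("Localizable.xcstrings")(writeJob("fr", 1)),
]);
```

Because the limiter is keyed per file, translations for different files still run in parallel; only reads and writes that touch the same file wait for each other.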

The streaming progress callback in the worker loop writes translated chunks to disk as they arrive from the LLM — you see files update incrementally rather than waiting for a full batch to complete.

The loader list is extensive: JSON, YAML, JSONC, JSON5, CSV, PO/POT, Markdown, MDX, XLIFF, Android XML, Flutter ARB, Xcode .strings/.xcstrings/.stringsdict, VTT, SRT, PHP, Twig, EJS, MJML, HTML, and TypeScript. Each one implements the same pull/push interface with format-specific parsing.

The compiler package (packages/compiler/src/index.ts) is a separate story — it's an unplugin-based build plugin that does AST transformation at compile time. It walks JSX, extracts text nodes, and generates locale dictionaries without you writing t() calls. The i18n-directive.ts uses a 'use i18n' directive to scope which components get transformed, similar to React's 'use client'. That compiler is marked deprecated in favor of a new one in the new-compiler package, which suggests active refactoring.

Using it

Setup is two commands:

    npx lingo.dev@latest init
    npx lingo.dev@latest run

init generates i18n.json. A minimal config targeting Spanish and French from English JSON files:

    {
      "$schema": "https://lingo.dev/schema/i18n.json",
      "version": "1.10",
      "locale": {
        "source": "en",
        "targets": ["es", "fr"]
      },
      "buckets": {
        "json": {
          "include": ["locales/[locale].json"]
        }
      }
    }

To use your own LLM instead of the Lingo.dev engine:

    {
      "provider": {
        "id": "anthropic",
        "model": "claude-3-5-haiku-20241022"
      }
    }

For CI, the GitHub Action wrapper is only a few lines:

    - name: Lingo.dev
      uses: lingodotdev/lingo.dev@main
      with:
        api-key: ${{ secrets.LINGODOTDEV_API_KEY }}

Pass pull-request: true and it opens a PR with translations instead of committing directly. Useful if you want a review step before shipping translated copy.

Rough edges

The compiler is in mid-refactor. The old @lingo.dev/_compiler package shows deprecation warnings and points to @lingo.dev/compiler, but the new compiler package doesn't have full feature parity yet — you'll hit warnings in the build output if you use it.

Bring-your-own-LLM works, but there's no way to tune the translation prompt per bucket type in i18n.json. The prompt field in the provider config is a global template. If you need different behavior for marketing copy versus UI strings versus documentation, you're configuring multiple projects.

The i18n.lock YAML file can accumulate duplicate entries if runs are interrupted — the code detects and deduplicates on load, which is pragmatic, but it means your lockfile can drift before the cleanup runs.

Test coverage is solid for loaders (each format has a .spec.ts), but the core execution path in execute.ts has no tests. The worker task logic and the streaming push behavior are tested manually or through integration, not unit tests.

Bottom line

If you're managing i18n across multiple locale files and tired of re-translating strings that haven't changed, the lockfile + delta approach here is the right design. Works well as a CLI tool pointed at flat JSON/YAML; gets more complex once you need format-specific behavior or want to control costs tightly across dozens of locales.